This project demonstrates an end-to-end Microsoft Fabric implementation, covering workspace setup, Lakehouse creation, and data ingestion using CSV and JSON files with Delta tables and Dataflow Gen2. It includes querying data through the SQL Analytics endpoint, building visual queries, managing shortcuts, and configuring access, permissions, and sharing. The project also integrates OneLake File Explorer, SSMS, and Power BI Desktop to enable a complete analytics workflow from data storage to visualization.

Part 1

*

This guide is designed to be followed in sequence:

Part 1 - Fabric Data Engineering & Analytics Pipeline

Part 2 - Fabric Data Modeling & Report Deployment Guide

*

All files used in this project are located here

*

Task Details

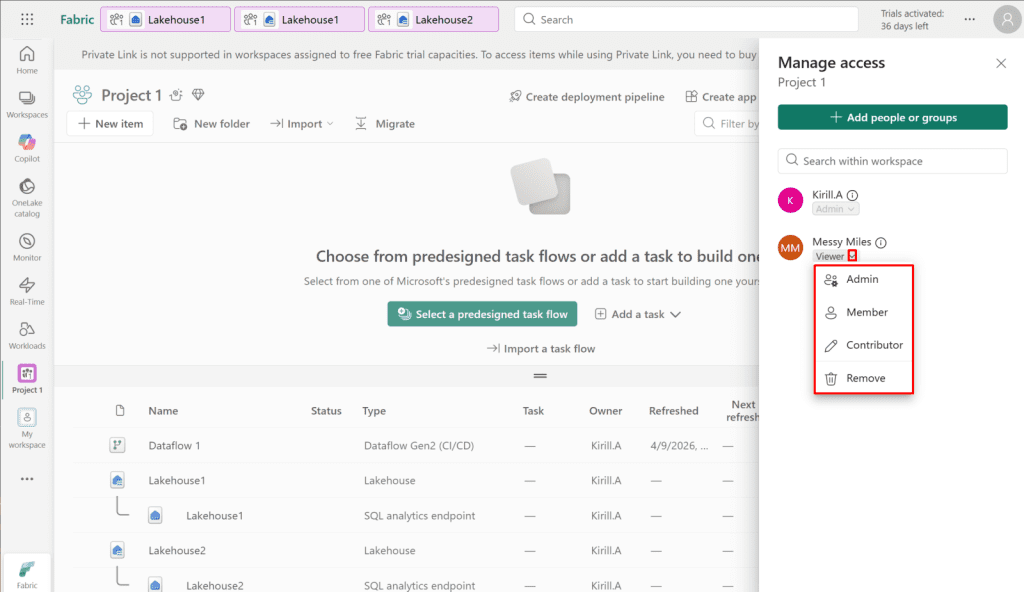

1. Sign up for Microsoft Fabric with a work account.

2. Microsoft Fabric pricing.

3. Create a Microsoft Fabric workspace.

4. Create a Lakehouse within your Fabric workspace.

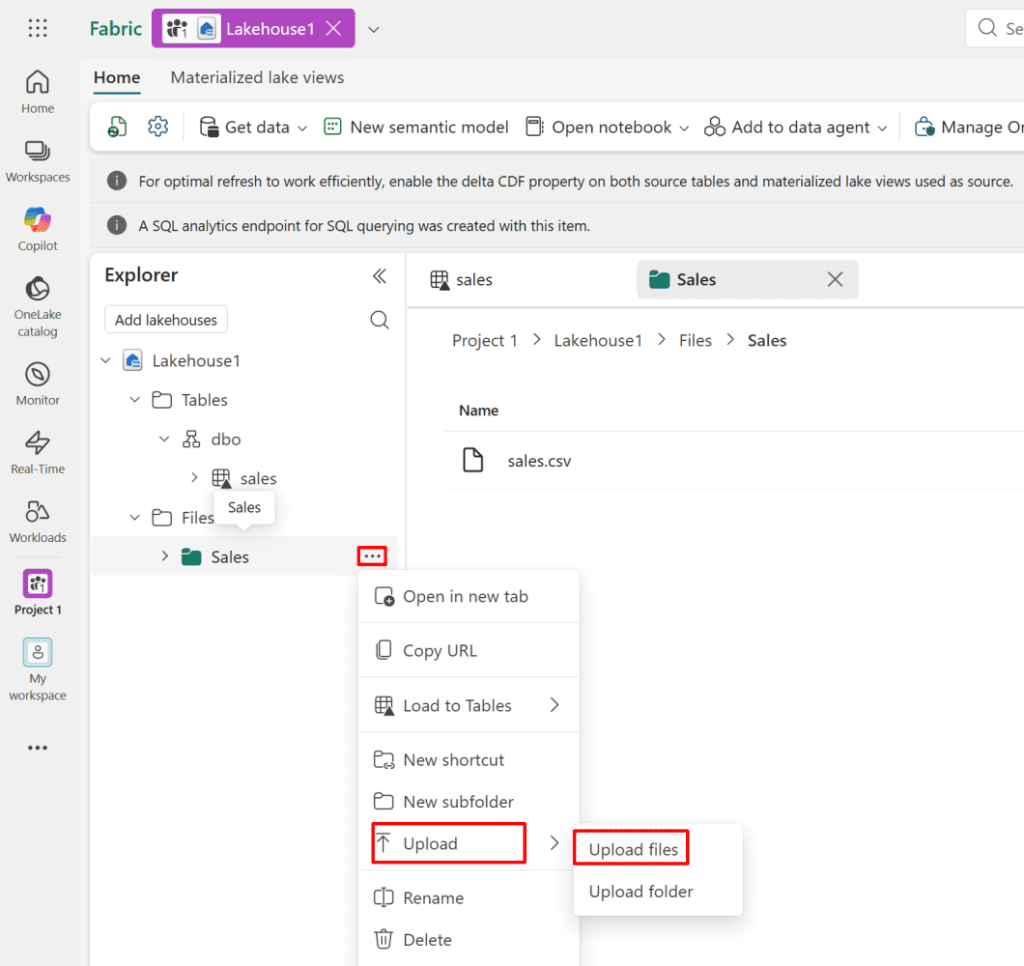

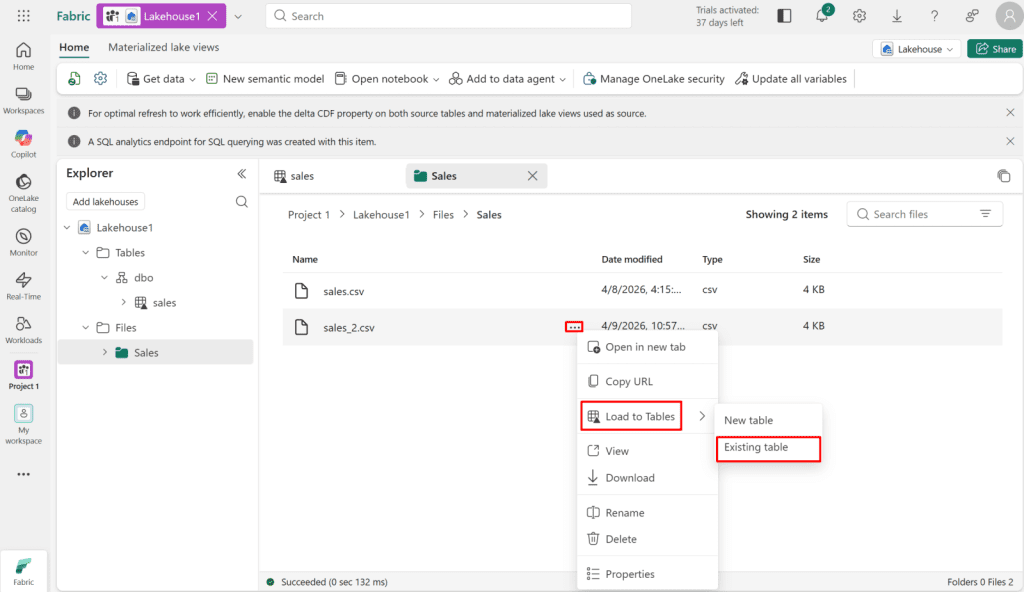

5. Load and append CSV data into Delta tables.

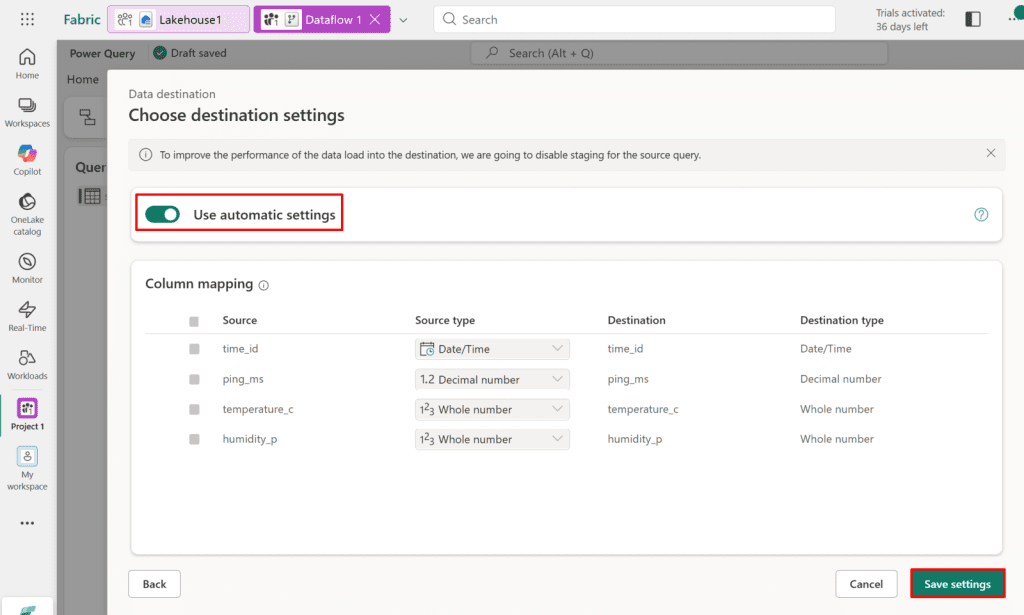

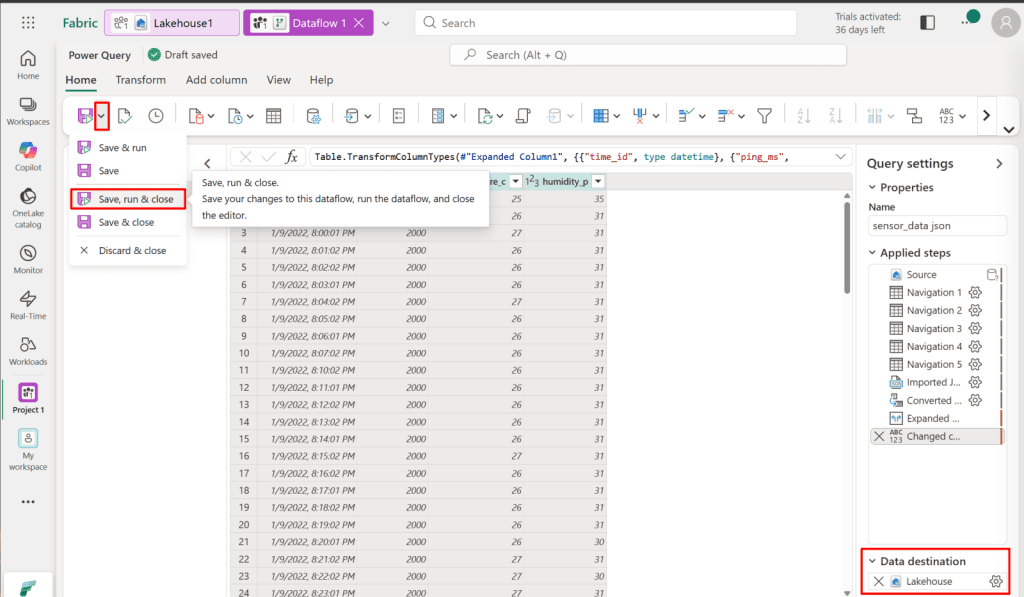

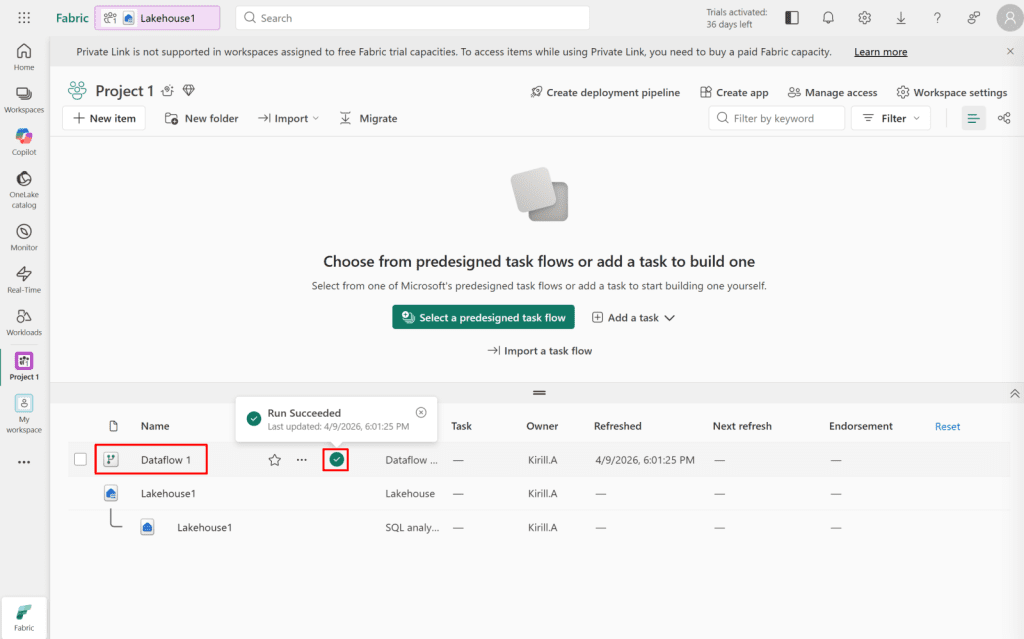

6. Load JSON data into Delta tables using Dataflow Gen2.

7. Query data using the SQL analytics endpoint.

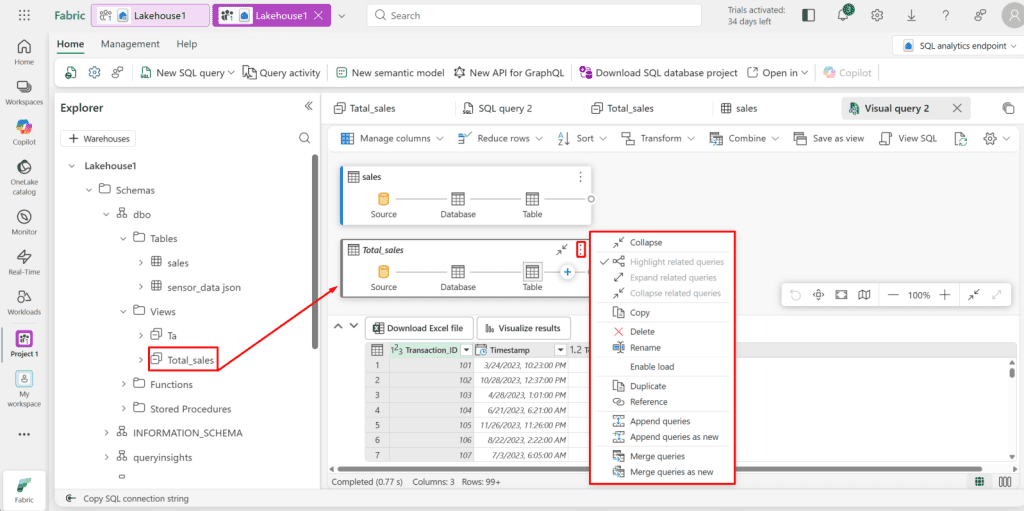

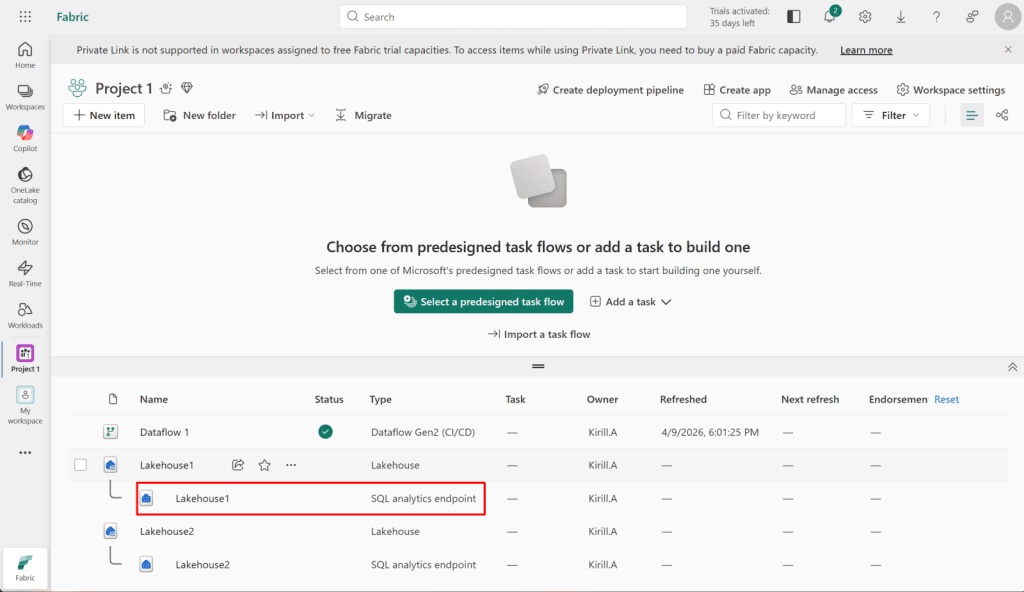

8. Create a visual query.

9. Create shortcuts.

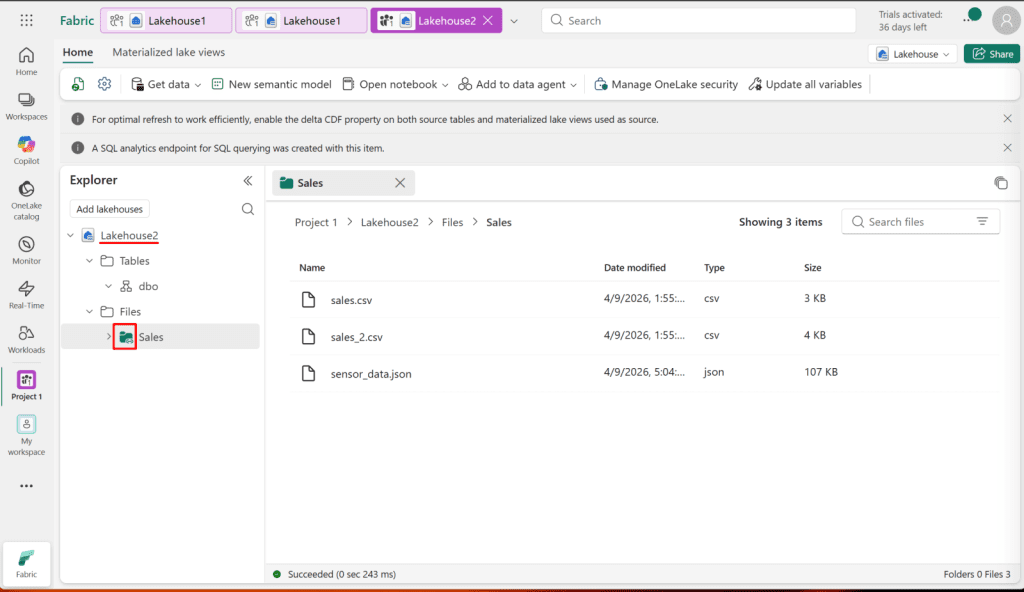

10. Manage access, permissions, and sharing.

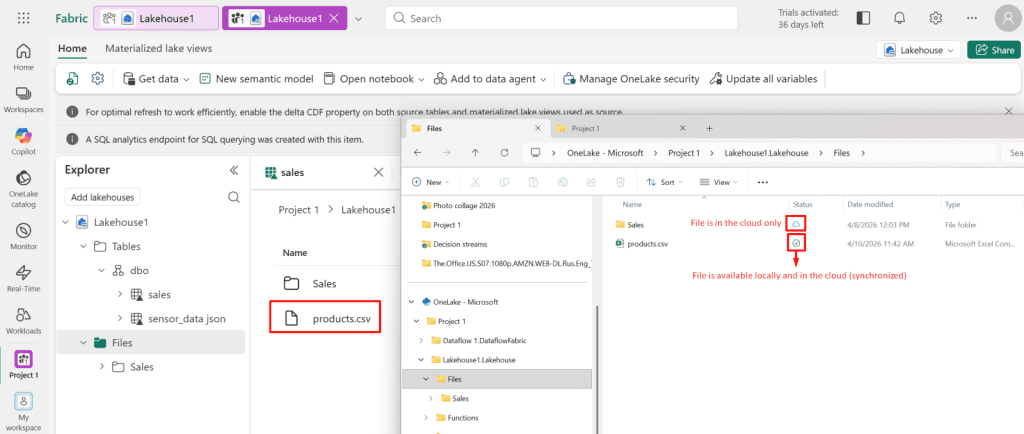

11. Sync your files with OneLake File Explorer to your workspace.

12. Connect SSMS to a Fabric Lakehouse SQL Analytics Endpoint.

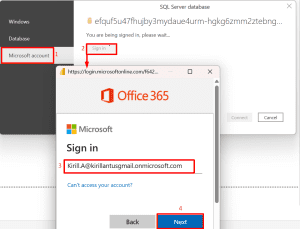

13. Connect Power BI Desktop to a Fabric Lakehouse SQL Analytics Endpoint.

*

Steps

Sign up for Microsoft Fabric with a work account

Any Microsoft Entra ID account in a Microsoft 365 tenant can access the Power BI / Fabric service, as long as:

- The account is a work or school account (every Entra ID account in your tenant is one automatically).

- The account has a Microsoft Fabric / Power BI license, which determines the level of access:

  - Free → personal use (My Workspace only)

  - Pro / Premium Per User → sharing, collaboration, workspace access

Here’s how it works:

- Entra ID account → identifies the user in your tenant.

- License assignment → tells Power BI what features the user can access.

- Microsoft Fabric → allows the account to log in, access workspaces, refresh datasets, and create reports.
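The relationship between account type, license, and access can be sketched as a small lookup. This is only an illustration of the rules described above: the `User` class, the `can_use` function, and the capability labels are hypothetical names, not part of any Microsoft API.

```python
from dataclasses import dataclass

# Capabilities per license tier, as summarized in this guide.
# The labels are illustrative only, not official feature names.
LICENSE_CAPABILITIES = {
    "Free": {"my_workspace"},
    "Pro": {"my_workspace", "sharing", "collaboration", "workspace_access"},
    "Premium Per User": {"my_workspace", "sharing", "collaboration", "workspace_access"},
}


@dataclass
class User:
    sign_in_name: str        # Entra ID sign-in name (hypothetical example value)
    is_work_or_school: bool  # personal accounts are not supported
    license_name: str        # "Free", "Pro", or "Premium Per User"


def can_use(user: User, capability: str) -> bool:
    """Return True if the account type and license together allow the capability."""
    if not user.is_work_or_school:
        # Personal accounts cannot sign in to the service at all.
        return False
    return capability in LICENSE_CAPABILITIES.get(user.license_name, set())


if __name__ == "__main__":
    analyst = User("analyst@contoso.example", True, "Free")
    author = User("author@contoso.example", True, "Pro")
    print("Free user can share:", can_use(analyst, "sharing"))
    print("Pro user can share:", can_use(author, "sharing"))
```

The account check comes first because a license only matters once the user can sign in with a work or school identity.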

Note: You can activate a free 60-day Microsoft Fabric trial to test all features, including report creation, sharing, and workspace collaboration, before purchasing a license.

Note: You must use a work or school account to sign in to the Microsoft Fabric service. Personal email accounts (such as Gmail or Outlook.com) are not supported. The account must be created within your organization’s tenant in Microsoft Entra ID and assigned an appropriate Microsoft Fabric license.

Note: Although you can sign in to the Microsoft Fabric service with a Global Administrator account, avoid using Global Admin accounts for Fabric automation or service tasks. Instead, create a dedicated work or school service account in Microsoft Entra ID and assign only the required Microsoft Fabric license and workspace permissions. This follows the principle of least privilege and improves security.

*

2. If the free trial is not available, go to the admin portal and enable “Users can create Fabric items.”

*

3. The Microsoft Fabric home screen opens, with options to create new workspaces from task flow templates and to access data analytics tutorials.

*

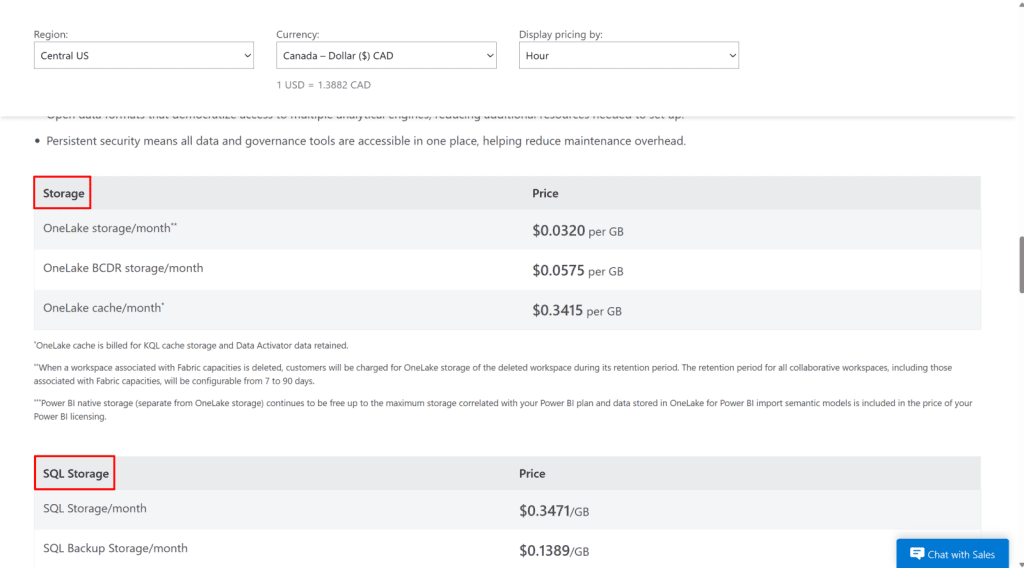

Microsoft Fabric pricing.

Microsoft Fabric pricing helps you understand the costs associated with using Fabric, including the free trial, storage, compute, and advanced features, so you can plan your project accordingly.

*

Microsoft Fabric costs are made up of several key components:

- User Licenses – Users need Power BI Pro or Premium Per User (PPU) licenses to create, share, or collaborate on reports and dashboards. Free users can only view content, and only in workspaces backed by a sufficiently large capacity (F64 or higher).

- Capacity (SKU) License – Defines the compute and memory allocation for a workspace. SKUs (like F64) determine performance and available features.

- Storage – Charges apply for data stored in your workspace, including datasets, files, and other assets.

- SQL Storage / Compute – Using Fabric SQL endpoints or dedicated storage for large datasets may incur additional costs depending on size and performance tier.

- Bandwidth (Data transfer) – Intra-region traffic (within the same Azure region) is usually free. Inter-region traffic or data sent to the public internet may incur charges based on Azure’s bandwidth pricing.

Quick Notes:

Power BI Pro or Premium licenses are required for users, as Microsoft Fabric relies on Power BI for sharing, collaboration, and report publishing.

In short, users need the appropriate license to access and interact with Fabric data and reports.

Fabric capacity is a pool of compute and storage resources assigned to a tenant. Each tenant starts with a default capacity that can be shared across workspaces, and additional capacities can be added to scale performance or isolate workloads.

A SKU (Stock Keeping Unit) defines the pricing tier and feature set of a Fabric capacity, determining the level of performance and capabilities available.

In short, the main cost components in Microsoft Fabric are user licenses, capacity SKU, storage, SQL resources, and bandwidth.
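To make the cost breakdown concrete, here is a minimal sketch that adds up the five components. Every price passed in is a placeholder chosen for easy arithmetic, not a real Microsoft rate, and the function name `estimate_monthly_cost` is hypothetical. Check the official pricing page for actual figures in your region.

```python
def estimate_monthly_cost(
    users: int,
    price_per_user: float,
    capacity_price: float,
    storage_gb: float,
    price_per_gb: float,
    sql_cost: float = 0.0,
    egress_gb: float = 0.0,
    price_per_egress_gb: float = 0.0,
) -> dict:
    """Sum the cost components listed above into a monthly estimate.

    All prices are supplied by the caller; nothing here is a real rate.
    """
    breakdown = {
        "user_licenses": users * price_per_user,       # Pro / PPU seats
        "capacity": capacity_price,                    # capacity SKU
        "storage": storage_gb * price_per_gb,          # stored data
        "sql": sql_cost,                               # SQL storage / compute
        "bandwidth": egress_gb * price_per_egress_gb,  # inter-region or internet egress
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


if __name__ == "__main__":
    # Placeholder numbers only.
    estimate = estimate_monthly_cost(
        users=10, price_per_user=10.0,
        capacity_price=500.0,
        storage_gb=100, price_per_gb=0.25,
    )
    for component, amount in estimate.items():
        print(f"{component}: {amount:.2f}")
```

Keeping each component as a separate entry makes it easy to see which one dominates as users or data volume grow.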

*

Create a Microsoft Fabric workspace.

A Microsoft Fabric workspace is the foundational environment where you create, manage, and organize your data, analytics, and integration resources. In this step, you will create a new workspace to begin building and securing your Fabric solutions.

Note: A workspace is like a folder or container where you keep all your project resources.

1. In the Workspaces tab, click New workspace.

*

2. Give your workspace a name and description.

*

3. Scroll down and select Fabric Trial to access all the features available in the free trial.

- Click Apply

*

4. Now you can create new items in your workspace.

*

5. A key advantage is that Microsoft Fabric, Power BI, and other tools can now be created and managed under the same platform. This unified experience simplifies workflows and keeps data management, visualization, and analytics integrated.

Note: You can navigate between Fabric, Power BI, and other resources by clicking the button in the bottom-left corner and selecting the desired view.
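The portal steps above can also be scripted. The sketch below builds (but does not send) a request for what I understand to be the Fabric REST API's Create Workspace operation, `POST /v1/workspaces`, with `displayName`, `description`, and `capacityId` fields. Treat the endpoint and field names as assumptions to verify against the current API reference. The function name, the workspace name, and the token value are hypothetical, and acquiring a Microsoft Entra ID access token is out of scope here.

```python
import json
import urllib.request

# Assumed base URL of the Fabric REST API; verify against the official reference.
FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_create_workspace_request(token, display_name, description="", capacity_id=None):
    """Build a POST request that would create a workspace (not sent here)."""
    payload = {"displayName": display_name}
    if description:
        payload["description"] = description
    if capacity_id:
        # Optional: assign the workspace to a capacity (for example, a trial capacity).
        payload["capacityId"] = capacity_id
    return urllib.request.Request(
        url=f"{FABRIC_API}/workspaces",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_create_workspace_request(
        token="<access-token>",  # placeholder, obtain via Microsoft Entra ID
        display_name="Fabric Demo Workspace",
        description="End-to-end Fabric walkthrough",
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # To actually send it: urllib.request.urlopen(req)
```

Building the request separately from sending it lets you inspect the payload before anything is created in your tenant.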

*

Create a Lakehouse within your Fabric workspace.

A Lakehouse in Microsoft Fabric is a unified storage and analytics platform that combines the flexibility of a data lake with the structure of a data warehouse. Creating a Lakehouse allows you to store, manage, and analyze both structured and unstructured data in one place.

*

1. In your workspace, click + New and select Lakehouse.

*

2. Enter a descriptive name for your Lakehouse and click Create.

Note: A SQL Analytics endpoint is created automatically with your Lakehouse. It’s your direct connection to run SQL queries on the Lakehouse data, perfect for analysis with Power BI, Excel, or Fabric notebooks without moving any data.
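Lakehouse creation can be scripted in the same way as the portal steps. This sketch builds (without sending) a request for what I understand to be the Fabric REST API's Create Lakehouse operation, `POST /v1/workspaces/{workspaceId}/lakehouses`. The endpoint shape and field names are assumptions to check against the current API reference. The function name, the all-zero workspace ID, the lakehouse name, and the token are hypothetical placeholders.

```python
import json
import urllib.request

# Assumed base URL of the Fabric REST API; verify against the official reference.
FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_create_lakehouse_request(token, workspace_id, display_name, description=""):
    """Build a POST request that would create a Lakehouse in a workspace (not sent here)."""
    payload = {"displayName": display_name}
    if description:
        payload["description"] = description
    return urllib.request.Request(
        url=f"{FABRIC_API}/workspaces/{workspace_id}/lakehouses",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_create_lakehouse_request(
        token="<access-token>",  # placeholder
        workspace_id="00000000-0000-0000-0000-000000000000",  # placeholder ID
        display_name="demo_lakehouse",
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

As the note above says, the SQL Analytics endpoint is created automatically with the Lakehouse, so it needs no separate request.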

*